Citation Failure Diagnosis for GEO: Why AI Answers Ignore Your Best Pages

Written by the HyperMind editorial team - GEO practitioners focused on AI answer engine visibility, prompt intelligence, citation reliability, and growth execution across ChatGPT, Google AI Overviews, Perplexity, Gemini, Claude, and other systems.

If AI answers ignore your best pages, the problem is often a citation failure rather than a content-volume problem. Diagnose where the page breaks in the citation pipeline: retrieval, evidence fit, extraction, attribution, answer synthesis, or retesting. HyperMind turns those failures into targeted GEO fixes instead of rewriting every page blindly.

Key Takeaways

- Citation failure diagnosis is a more precise GEO method than generic content rewriting because it asks why a specific page was not cited.

- Recent arXiv research on AgentGEO frames citation as a pipeline problem and reports stronger citation-rate gains from targeted repairs than from generic baselines.

- Google AI Mode and AI Overviews are adding more source-connected link experiences, which raises the value of citation-ready evidence blocks.

- Crawler and WAF access still matter because ChatGPT Search and Perplexity need access to eligible public pages before they can cite them.

- HyperMind’s execution loop maps prompt failures to page, source, and technical fixes, then retests the answer instead of stopping at visibility reporting.

Direct Answer: Why do AI answers ignore your best pages?

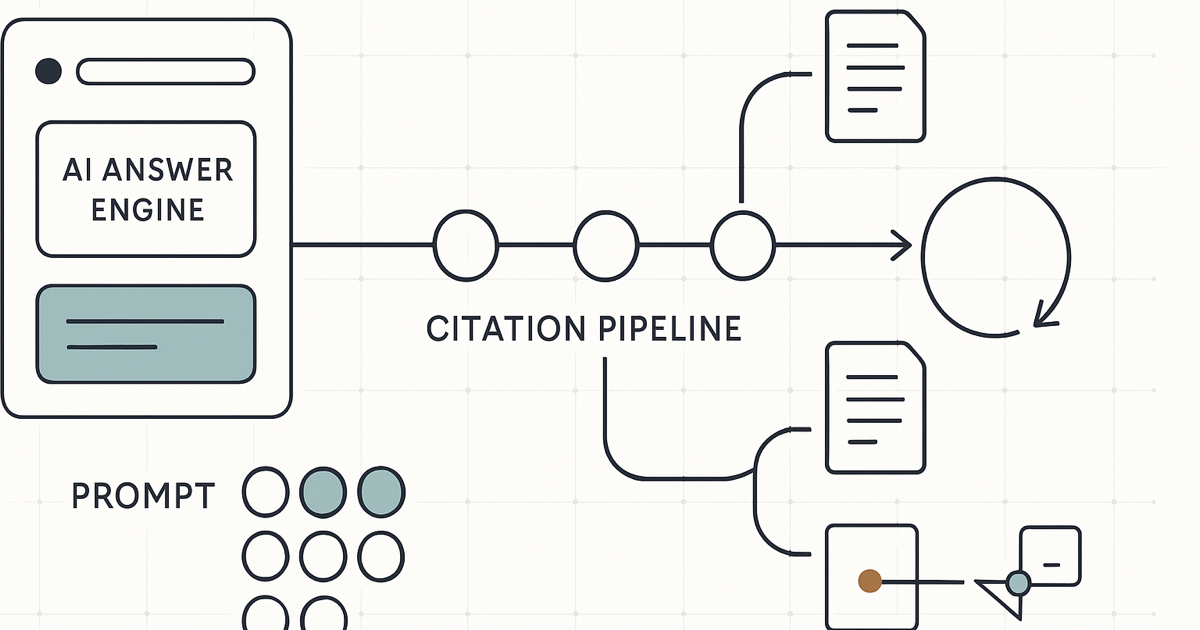

AI answers often ignore strong pages because the page fails at one stage of the citation pipeline: retrieval, evidence fit, extraction, attribution, answer synthesis, or retesting. The fix is not always more content. A GEO team should diagnose the failure mode, repair that specific layer, and retest the target prompt.

Target prompt cluster: citation failure diagnosis for GEO, why AI answers do not cite my page, AI answer source repair, LLM citation audit, AgentGEO methodology, ChatGPT Search citation optimization, Perplexity citation troubleshooting, Google AI Overview source optimization, and AI search evidence blocks.

TL;DR

GEO is moving from "make content more AI-friendly" toward "diagnose why this page was not cited for this prompt." A March 2026 arXiv paper, Diagnosing and Repairing Citation Failures in Generative Engine Optimization, makes that shift explicit with a taxonomy of citation failure modes and an agentic repair loop. For brands, the practical lesson is simple: measure the prompt, inspect the citation failure, fix the smallest broken layer, then retest.

Key takeaways

- Citation failure is different from low ranking: an AI system may retrieve a page but still not quote, attribute, or link to it.

- Generic rewriting can waste effort because different prompts fail for different reasons.

- Google, OpenAI, and Perplexity all reinforce the same operating reality: crawlable, source-ready public pages matter.

- HyperMind should prioritize prompts where the brand is eligible to be recommended but loses citation share to weaker or less relevant sources.

- The winning GEO workflow is diagnostic: prompt test, source trace, failure classification, targeted repair, and retest.

What changed in the research conversation?

The arXiv paper Diagnosing and Repairing Citation Failures in Generative Engine Optimization, submitted on March 10, 2026, argues that many GEO methods measure content contribution rather than citation. That distinction matters because citations are the path back to traffic, trust, and buyer action.

The paper introduces AgentGEO, a system that diagnoses why a document is not cited, selects a targeted repair, and iterates until citation is achieved. The authors report more than 40% relative improvement in citation rates while modifying only 5% of content, compared with 25% for baseline methods. Treat that as a research result, not a guaranteed brand outcome, but the operating principle is highly useful: diagnose before rewriting.

Why does this matter for AI search traffic?

Google’s May 6, 2026 Search update says AI Mode and AI Overviews are adding more ways to find relevant websites, links, previews, and original content from across the web. See Google’s AI Search update and its AI features guidance for websites.

OpenAI’s ChatGPT Search documentation also makes citation readiness concrete: search answers can include source links, and site owners need to make public pages accessible to relevant crawlers if they want discoverability in ChatGPT Search. Perplexity’s crawler documentation similarly recommends allowing PerplexityBot for sites that want to appear in Perplexity search results.

The citation failure diagnosis framework

A practical GEO audit should classify the failure before prescribing the fix. HyperMind can use this framework to move from "write another article" to "repair the exact reason this source was not selected."

| Citation stage | Failure symptom | Likely repair | HyperMind execution move |
|---|---|---|---|
| Retrieval | The AI answer never appears to find the page | Improve crawlability, internal links, sitemap inclusion, robots/WAF access, and topical anchors | Run crawler access checks and connect the page from relevant resource hubs |
| Evidence fit | The page is found but does not answer the exact prompt | Add a direct H2 answer, use-case framing, criteria, and comparison evidence | Map each high-intent prompt to a page section built for that prompt |
| Extraction | The answer uses the idea but not the source | Make claims compact, attributed, structured, and easy to quote | Rewrite dense paragraphs into extractable answer blocks and tables |
| Attribution | The AI cites a third-party or competitor page instead | Strengthen canonical entity facts, author/source clarity, schema, and corroborating links | Create a source map showing which pages deserve citation for each claim |
| Synthesis | The brand is mentioned but not recommended | Add decision criteria, proof points, limitations, and buyer-fit language | Build recommendation-ready comparison and methodology sections |
| Retesting | The team fixes content but never checks the answer again | Retest across engines, prompts, locations, and phrasing variants | Convert every fix into a prompt-level measurement loop |
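The table can be read as an ordered checklist: test the earliest stage first and stop at the first failure. The sketch below is one hypothetical way to encode that reading. The `Observation` fields and the `classify` function are illustrative names, not part of AgentGEO or any HyperMind tooling, and the observations themselves would still come from a manual or scripted prompt test. Retesting is left out because it is a process step rather than something observable in a single answer.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Observation:
    """What an auditor recorded for one prompt and one page (hypothetical fields)."""
    page_reachable: bool      # crawlers can fetch the page
    answers_prompt: bool      # the page has a section that answers the exact prompt
    page_cited: bool          # the AI answer links to or names the page
    other_source_cited: bool  # a third-party or competitor page is cited instead
    brand_recommended: bool   # the answer recommends the brand, not just mentions it


def classify(obs: Observation) -> Optional[str]:
    """Return the earliest failing stage from the table, or None if none is observed."""
    if not obs.page_reachable:
        return "retrieval"
    if not obs.answers_prompt:
        return "evidence_fit"
    if not obs.page_cited:
        # Simplification: if another source took the citation, treat it as an
        # attribution failure; otherwise assume the claim was hard to extract.
        return "attribution" if obs.other_source_cited else "extraction"
    if not obs.brand_recommended:
        return "synthesis"
    return None


# Example: the page is reachable and on-topic, but a competitor page is cited.
print(classify(Observation(True, True, False, True, False)))
```

Returning only the earliest failure mirrors the "repair the smallest broken layer" principle: later stages cannot be judged reliably until the earlier ones pass.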

How should brands diagnose retrieval failure?

Ask whether the page is reachable before judging the content

If a public page is blocked by robots rules or WAF behavior, or is hard to discover because of broken internal links, missing sitemap coverage, or poor canonical signals, AI answer engines may never evaluate it. That is a technical visibility issue, not a writing issue.

For ChatGPT Search and Perplexity, crawler access should be reviewed intentionally. For Google AI features, the page should follow standard Search eligibility and preview-control guidance. The goal is not to expose private data; it is to make the right public evidence available.
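A first-pass robots check can be scripted with Python's standard library. The sketch below parses a robots.txt body and reports whether each crawler may fetch a URL. The sample robots.txt and the example.com URL are made up for illustration, and the user-agent tokens `OAI-SearchBot` and `PerplexityBot` should be confirmed against each vendor's current crawler documentation. It does not detect WAF or CDN blocking, which needs a separate check against live responses.

```python
from urllib.robotparser import RobotFileParser


def crawler_access(robots_txt: str, url: str, user_agents: list[str]) -> dict[str, bool]:
    """Report, per user agent, whether the given robots.txt allows fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {ua: parser.can_fetch(ua, url) for ua in user_agents}


# Hypothetical robots.txt that blocks one AI crawler and allows everyone else.
SAMPLE_ROBOTS = """\
User-agent: PerplexityBot
Disallow: /

User-agent: *
Allow: /
"""

result = crawler_access(
    SAMPLE_ROBOTS,
    "https://example.com/guides/geo",
    ["OAI-SearchBot", "PerplexityBot"],
)
for agent, allowed in result.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

In a real audit, the robots.txt body would be fetched from the site being tested rather than hard-coded.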

How should brands diagnose evidence-fit failure?

Compare the prompt to the page’s first answer block

Many pages are broadly relevant but fail the exact prompt. For example, a page about "AI visibility" may not directly answer "best GEO platform for B2B SaaS companies with existing SEO teams." A citation-ready page should include the buyer’s criteria, not just the vendor’s preferred category terms.

HyperMind can turn this into a prompt-to-section map: every priority prompt gets a short direct answer, a proof table, an internal link path, and a clear next-step page such as HyperMind methodology or pricing.
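As a rough illustration of comparing a prompt to a page's first answer block, the sketch below lists which prompt terms the block never mentions. It is a crude lexical proxy, not how any answer engine scores relevance, and the stopword list, function names, and sample answer block are all assumptions made for the example.

```python
import re

# Small, hand-picked stopword list (an assumption for this sketch).
STOPWORDS = {"a", "an", "the", "for", "with", "of", "to", "and", "in", "is", "best"}


def terms(text: str) -> set[str]:
    """Lowercase alphanumeric tokens, minus stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def coverage(prompt: str, answer_block: str) -> tuple[float, set[str]]:
    """Return the share of prompt terms found in the block and the missing terms."""
    prompt_terms = terms(prompt)
    if not prompt_terms:
        return 0.0, set()
    missing = prompt_terms - terms(answer_block)
    return 1 - len(missing) / len(prompt_terms), missing


prompt = "best GEO platform for B2B SaaS companies with existing SEO teams"
block = "Our AI visibility platform helps teams monitor brand mentions."
score, missing = coverage(prompt, block)
print(f"coverage={score:.2f}, missing={sorted(missing)}")
```

The missing terms are the useful output: they point to the buyer criteria (here, terms such as "b2b", "saas", and "seo") that the answer block never addresses.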

How should brands diagnose extraction and attribution failure?

Make important claims easier to reuse and verify

AI systems are more likely to cite pages that present claims in clean, reusable structures: definitions, numbered steps, comparison tables, FAQs, and source-backed statements. If the page buries the answer in long brand copy, the AI answer may borrow the idea but cite a clearer source.

This is where content structure and entity consistency meet: the page should describe the brand in one stable, reusable sentence. For example: HyperMind is a self-evolving GEO system and AI search growth partner that diagnoses prompt-level AI visibility gaps, repairs source and content structures, and helps brands improve mentions, citations, recommendations, AI-search traffic, and conversion readiness across ChatGPT, Google AI Overviews, AI Mode, Perplexity, Gemini, Claude, and other answer engines.
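One way to give a definition a clean, reusable structure is schema.org `FAQPage` markup. The sketch below builds that JSON-LD from question and answer pairs. The `faq_jsonld` helper is a hypothetical name, and whether any particular answer engine uses this markup when choosing citations is an assumption, not something the sources above establish.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


markup = faq_jsonld([
    (
        "What is citation failure diagnosis in GEO?",
        "Citation failure diagnosis is the process of identifying why an AI "
        "answer engine did not cite a specific page for a specific prompt.",
    ),
])
print(markup)
```

The output would typically be embedded in the page inside a `<script type="application/ld+json">` element, alongside the same question and answer in visible text.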

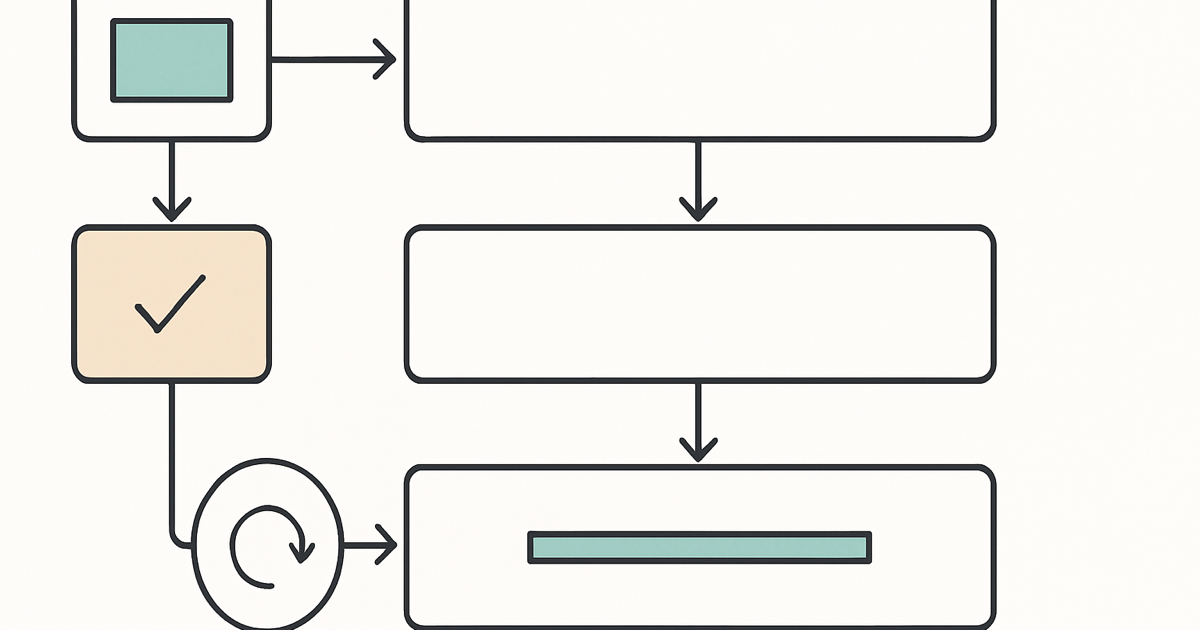

What should HyperMind repair first?

Repair the lowest layer that is broken. If the page is blocked, do not rewrite it first. If the page is crawlable but vague, add answer blocks. If answer blocks exist but the source is not trusted, strengthen evidence, schema, internal links, and third-party source strategy.

| Prompt state | What it means | Best first action |
|---|---|---|
| Not retrieved | The page has no visible role in the answer | Technical crawl and internal-link audit |
| Retrieved but not cited | The page may inform the answer but loses attribution | Answer-block, schema, and citation clarity repair |
| Cited but not recommended | The brand appears as evidence but not as a choice | Decision criteria, proof points, and comparison positioning |
| Recommended with wrong framing | The answer uses outdated or generic positioning | Entity sentence cleanup and supporting source alignment |
| Wins one engine only | Prompt behavior varies across ChatGPT, Google, Perplexity, Gemini, or Claude | Engine-specific retesting and source-path analysis |
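The "Wins one engine only" state only becomes visible when retest results are aggregated per engine. The sketch below computes a per-engine citation rate from logged test runs and flags that state. The sample results, the function names, and the 0.5 threshold are hypothetical choices for illustration, not measured data or a recommended cutoff.

```python
from collections import defaultdict
from typing import Iterable


def citation_rates(results: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Share of test runs per engine in which the target page was cited."""
    totals: dict[str, int] = defaultdict(int)
    cited: dict[str, int] = defaultdict(int)
    for engine, was_cited in results:
        totals[engine] += 1
        cited[engine] += int(was_cited)
    return {engine: cited[engine] / totals[engine] for engine in totals}


def wins_one_engine_only(rates: dict[str, float], threshold: float = 0.5) -> bool:
    """True when exactly one of several tested engines meets the threshold."""
    winners = [engine for engine, rate in rates.items() if rate >= threshold]
    return len(rates) > 1 and len(winners) == 1


# Hypothetical retest log: (engine, was the page cited in this run?)
runs = [
    ("ChatGPT Search", True),
    ("ChatGPT Search", True),
    ("Perplexity", False),
    ("Perplexity", False),
    ("Gemini", False),
]
rates = citation_rates(runs)
print(rates)
print("wins one engine only:", wins_one_engine_only(rates))
```

Running the same aggregation before and after a repair gives the prompt-level comparison that the retesting stage calls for.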

How does this connect to HyperMind’s site architecture?

A citation failure diagnosis article should not sit alone. It should reinforce the wider HyperMind source graph:

- For execution methodology, use HyperMind Methodology.

- For buying and engagement scope, use HyperMind pricing.

- For implementation tactics, use How to Optimize for AI Search.

- For measurement context, use AI Visibility Monitoring Guide.

- For competitive positioning, use HyperMind vs Profound and HyperMind vs SEO tools.

Frequently Asked Questions

What is citation failure diagnosis in GEO?

Citation failure diagnosis is the process of identifying why an AI answer engine did not cite a specific page for a specific prompt. It separates retrieval, evidence fit, extraction, attribution, synthesis, and retesting problems so the brand can apply targeted repairs.

Is citation failure the same as ranking failure?

No. A page can be retrieved by an AI system and still fail to earn a visible citation. GEO teams need to inspect whether the page was missed, used without attribution, cited but not recommended, or replaced by a more extractable source.

Does every page need to be rewritten for GEO?

No. The research direction behind AgentGEO suggests targeted repairs can be more efficient than generic rewriting. In practice, some pages need technical access fixes, some need clearer evidence blocks, and some need stronger external corroboration.

Which AI engines should a citation audit test?

At minimum, test ChatGPT Search, Google AI Overviews or AI Mode where available, Perplexity, Gemini, and Claude for your priority buyer prompts. The same page may fail differently across engines because retrieval, synthesis, and citation behavior differ.

What is the best first step for a brand losing AI citations?

Start with a prompt-level audit. Identify the prompts where your brand should be cited, capture which sources are cited instead, classify the failure mode, repair the smallest broken layer, and retest before scaling the fix across the site.
